SparkRM - What is it?

By: Jesus Puente

SparkRM is a new Radoop operator - but not just another addition to the collection of 70+ operators that the Radoop extension includes. It opens up a wealth of new use cases for exploiting and analyzing Hadoop data with RapidMiner.

SparkRM is a meta-operator, which means that you can double-click on it to open a new canvas where you can design a new process (similar to what you would find in “Split Validation”, for instance). What’s special about SparkRM is that, even though it is a Radoop operator, the inner process has to be designed using regular, non-Radoop RapidMiner operators. Whatever operator or sub-process you place inside SparkRM is packaged and pushed to Hadoop for parallel execution.

Let’s imagine you have a lot of text data in your Hadoop environment and you want to analyze it using RapidMiner’s Text Processing Extension. Well, now you can: read the data and feed it into the SparkRM operator.

The data is passed on to the non-Radoop sub-process inside. You can process, tokenize, create word lists, find expressions, n-grams, etc., all within the Hadoop cluster.
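
To make the idea concrete, here is a minimal Python sketch of the pattern: the same ordinary, non-distributed text-processing routine is applied to every partition of the data in parallel. This is an illustration only, not how SparkRM is implemented. The function names (`tokenize`, `ngrams`, `process_partition`, `run_on_partitions`) are hypothetical, the sample documents are made up, and local threads stand in for the cluster's executors.

```python
import re
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def tokenize(text):
    """Lowercase the text and split it into alphabetic tokens."""
    return re.findall(r"[a-z]+", text.lower())


def ngrams(tokens, n):
    """Return the n-grams of a token list as space-joined strings."""
    return [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def process_partition(documents):
    """Hypothetical stand-in for the inner sub-process: build a word list
    (token and bigram counts) for one partition of documents."""
    counts = Counter()
    for doc in documents:
        tokens = tokenize(doc)
        counts.update(tokens)
        counts.update(ngrams(tokens, 2))
    return counts


def run_on_partitions(partitions, workers=2):
    """Apply the same sub-process to every partition in parallel.
    Threads are an assumption here; they stand in for cluster executors."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(process_partition, partitions))


# Made-up sample data: two partitions of short documents.
partitions = [
    ["Spark runs on Hadoop", "Hadoop stores data"],
    ["RapidMiner analyzes data"],
]
word_lists = run_on_partitions(partitions)
```

Each entry of `word_lists` is the word list for one partition. Note that the two partitions produce different sets of words, which matters when the per-partition results are combined.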

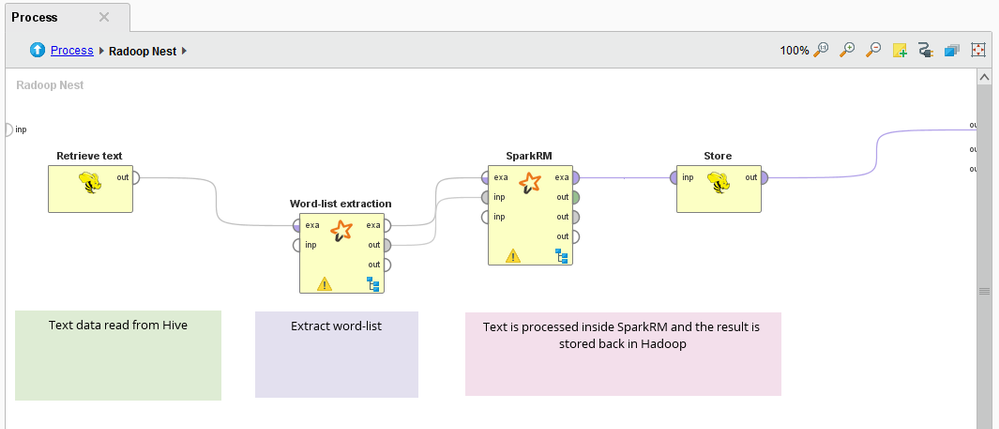

A typical process would look like:

SparkRM Process

And this is what you would have inside the SparkRM operator:

SparkRM Text Mining Extension

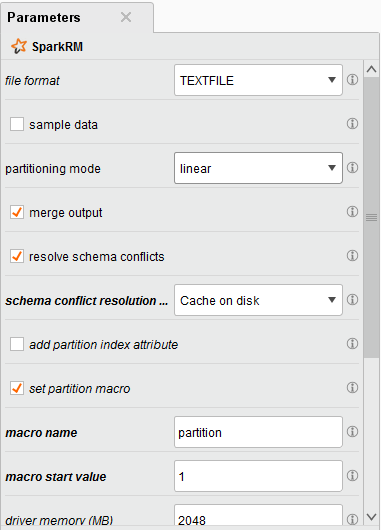

Some typical parameters of SparkRM include the file format (textfile or parquet) and the partitioning mode.

SparkRM Parameters

Once the task is finished, the result is returned as usual through the output ports. The first output port delivers data sets, and the results from the different partitions can be merged. If the data coming from the different partitions is consistent (same metadata), the operator simply appends everything together. If not, there is an option to “resolve schema conflicts”, which adds the necessary missing values so that the full data set contains all the information from all the partitions. This is especially useful when analyzing text, because the word list of one text will probably not be the same as that of another.
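
The merge behavior described above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the operator's actual implementation: `merge_partitions` is a hypothetical function, each partition is modeled as a list of row dictionaries, the sample rows are made up, and `None` plays the role of a missing value. The `resolve_schema_conflicts` argument is named after the option mentioned above.

```python
def merge_partitions(partitions, resolve_schema_conflicts=True):
    """Append partition results (lists of row dicts) into one data set.

    If the schemas differ and resolve_schema_conflicts is True, every row
    is padded with None (a missing value) for the columns it lacks.
    """
    # Union of all column names, kept in first-seen order.
    columns = []
    for rows in partitions:
        for row in rows:
            for name in row:
                if name not in columns:
                    columns.append(name)

    merged = []
    for rows in partitions:
        for row in rows:
            if set(row) != set(columns) and not resolve_schema_conflicts:
                raise ValueError("partitions have different schemas")
            # row.get returns None for columns this row does not have.
            merged.append({name: row.get(name) for name in columns})
    return columns, merged


# Made-up word counts from two partitions with different word lists.
partition_a = [{"doc": 1, "hadoop": 2, "spark": 1}]
partition_b = [{"doc": 2, "hadoop": 1, "radoop": 3}]

columns, merged = merge_partitions([partition_a, partition_b])
```

Here `columns` ends up as the union of both word lists, and each row carries a missing value for the words that never appeared in its own partition.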

I have described an example for text processing, but you can imagine any other extension or algorithm that’s not in Radoop: Series Forecasting, Deep Learning, Neural Networks, Process Mining, etc.