Moving data from RDBMS to Hadoop/Hive

bhupendra_patil

Administrator, Employee, Member Posts: 168

It is often necessary to move data from relational databases into Hadoop in order to start leveraging the power of Hadoop.

Depending on the use case, this may be a one-time effort or something you need to do periodically. RapidMiner makes this easy with the RapidMiner Studio client and the Radoop extension. This article describes how to set up a RapidMiner workflow that imports data from a relational data store into Hadoop.

You can download the two products here:

https://my.rapidminer.com/nexus/account/index.html#downloads

or get in touch with us at https://rapidminer.com/contact-sales-request-demo/

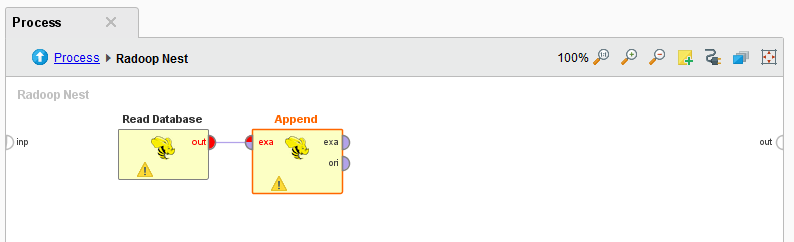

- Drag the Radoop Nest operator into a new process canvas.

- Configure the Radoop connection. (The details for setting up Radoop connections are here: http://docs.rapidminer.com/radoop/installation/configuring-radoop-connections.html)

- Provide a table prefix. (The table prefix is used for temporary objects, which are automatically deleted in most cases.)

- Double-click the Radoop Nest operator.

- Drag in the "Read Database" operator from the Extensions >> Radoop >> Data Access >> Read group.

- Configure the Read Database operator. It lets you connect through a predefined database connection, a JDBC URL, or a JNDI name. To define the source, you can build a query, give a table name, or specify a SQL file.

- Then connect the output port of Read Database to the "Store in Hive" operator.

- Use the Store in Hive configuration options to determine how the data is stored and partitioned, and whether it should use external tables, custom storage, and a custom SerDe.

- The Store in Hive operator can also drop the target table first if it already exists.

- If you need to append to existing Hive tables, use the "Append into Hive" operator instead.
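For readers who think in HiveQL, the Store in Hive options map roughly onto clauses of a `CREATE TABLE` statement. The sketch below only illustrates that mapping. The helper `hive_create_table`, its parameter names, and the example table are hypothetical, and the statements it builds are not necessarily what Radoop generates internally.

```python
def hive_create_table(table, columns, partition_by=None, external_location=None,
                      stored_as=None, serde=None, drop_first=False):
    """Build HiveQL statements that roughly mirror the Store in Hive options.

    Hypothetical helper for illustration only; it is not part of RapidMiner.
    columns and partition_by are lists of (name, hive_type) tuples.
    """
    statements = []
    if drop_first:
        # Corresponds to the "drop the table first if it exists" option.
        statements.append(f"DROP TABLE IF EXISTS {table}")

    partition_by = partition_by or []
    partition_names = {name for name, _ in partition_by}
    # In Hive, partition columns are declared in PARTITIONED BY,
    # not in the regular column list.
    cols = ", ".join(f"{name} {hive_type}" for name, hive_type in columns
                     if name not in partition_names)

    kind = "EXTERNAL TABLE" if external_location else "TABLE"
    clauses = [f"CREATE {kind} {table} ({cols})"]
    if partition_by:
        parts = ", ".join(f"{name} {hive_type}" for name, hive_type in partition_by)
        clauses.append(f"PARTITIONED BY ({parts})")
    if serde:
        clauses.append(f"ROW FORMAT SERDE '{serde}'")      # custom SerDe
    if stored_as:
        clauses.append(f"STORED AS {stored_as}")           # storage format
    if external_location:
        clauses.append(f"LOCATION '{external_location}'")  # external table path
    statements.append(" ".join(clauses))
    return statements


# Example: a partitioned ORC table that is dropped and recreated on each run.
for stmt in hive_create_table(
    "sales",
    [("id", "INT"), ("amount", "DOUBLE"), ("sale_date", "STRING")],
    partition_by=[("sale_date", "STRING")],
    stored_as="ORC",
    drop_first=True,
):
    print(stmt + ";")
```

In the append case the table already exists, so there is nothing to drop or create; in plain HiveQL that would correspond to an `INSERT INTO TABLE` statement rather than a new `CREATE TABLE`.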

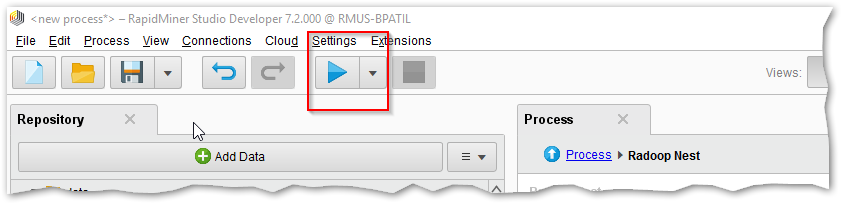

- To run the process now, click the blue play button at the top.

- You can also schedule the process to run if you have RapidMiner Server installed and configured.

You can add more than one of these read-store pairs to the same process.

Sometimes the data needs some preparation before it is actually stored. You can build those workflows easily with RapidMiner.
