Jackhammer Hacks - Improve your process design with the Jackhammer Extension

land

RapidMiner Certified Analyst, RapidMiner Certified Expert, Member Posts: 2,531


If you are an experienced user of RapidMiner, chances are that you have already noticed that RapidMiner is a golden hammer. Whatever kind of Data Science problem you are facing, the probability is high that you can create a solution with RapidMiner off the top of your head. And often enough the harsh project reality shows that a golden hammer is direly needed. The only alternative to a golden hammer would be to use hundreds of different tools, but, figuratively speaking, they would make your tool belt so heavy that you would only be able to crouch through your project.

And sometimes you need not just one golden hammer, but many of them: many standard projects nowadays involve amounts of data that do not yet justify the usage and overhead of real Big Data technologies, but still require a special approach to be executable in memory on commodity hardware. This is exactly where our extension fits in: it will turn your golden hammer RapidMiner into an easy-to-use, golden Jackhammer, hammering away a lot of your daily pains.

In the following sections we will outline a few areas where the extension will help you. We will close this article with an exhaustive list of operators. From time to time we will come back and update the list, as we are constantly improving the extension.

Project Management

In RapidMiner you organize your projects in repositories that you are free to structure as you like. After years of seeing a lot of bad ideas, we at Old World Computing have developed a standardized best practice for repository design. We believe that avoiding unnecessary diversity helps you focus on the real problems. Passing a standardized project structure to a colleague also makes it much easier for them to understand what is where.

Another advantage of this standardized layout is that you can automate several tasks that become important in productive projects:

- Automated storage of results without confusing different processes' results or entering path and macros every time

- Continuous integration tests for all processes to ensure compatibility across RapidMiner versions or infrastructure updates

- Deployment from a central development server to a star shaped staging infrastructure possibly with different servers per project

However, besides the standard structure, you need tool support to implement this. The Jackhammer extension brings new operators that allow you to solve these tasks. We found that another important point for larger-scale projects, especially those involving a full team of data scientists, is to ensure the re-usability and control of processes. So we added specific operators to handle:

- exceptional situations with better control than the built in functionality. It supports delayed re-tries to cope with situations where parts of an infrastructure may be unreachable temporarily

- situations where you want to either stop a process entirely, but not erroneously, or just skip a specific loop iteration

- debugging of reusable library processes in a comfortable way

- undetermined execution order within processes without introducing an unnecessary sub process level
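To make the first point more concrete, here is a minimal Python sketch of what "delayed re-tries" means as a control-flow pattern. It is not how the extension implements its exception handling; the function name `run_with_retries` and its parameters are made up for illustration only.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_retries(
    task: Callable[[], T],
    retries: int = 3,
    delay_seconds: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `task`; on failure wait `delay_seconds` and try again.

    `retries` is the number of additional attempts after the first one.
    All names here are hypothetical, not part of any RapidMiner API.
    """
    attempt = 0
    while True:
        try:
            return task()
        except Exception:
            if attempt >= retries:
                raise  # out of attempts: surface the last error
            attempt += 1
            sleep(delay_seconds)  # give the infrastructure time to recover


# Usage: a resource that is unreachable twice and then answers.
calls = {"n": 0}


def flaky_query() -> str:
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("database temporarily unreachable")
    return "rows"


result = run_with_retries(flaky_query, retries=5, delay_seconds=0.0)
```

The delay is what distinguishes this from a plain try/except: a temporarily unreachable server gets a chance to come back before the next attempt.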

Ease of use

Ever copied a set of operators to the beginning of a process? Ever sworn about having to shift the entire process AND all notes to get a nice, readable process design?

It seems tiny, but once you get used to it, you will never want to live without the Shift Operators action:

Insert or paste a set of operators, mark them, select Shift Operators or press the hot key, and the remaining process will be shifted automatically to clear the space for the new operators. This includes all notes added to the process!

After you inserted additional operators

After selecting and choosing to Shift Operators


Of course there are also multiple operators included that make life easier in many situations. A very common pattern is to generate data randomly and then discard all of it just to get an empty example set to initiate loops, etc. Our extension now provides an easier-to-read, one-operator solution. The same goes for generating number sequences, group index sequences, etc.

Other operators allow the same operation as already present in core RapidMiner, but now for multiple attributes at the same time, saving the complexity of loops.

Caching

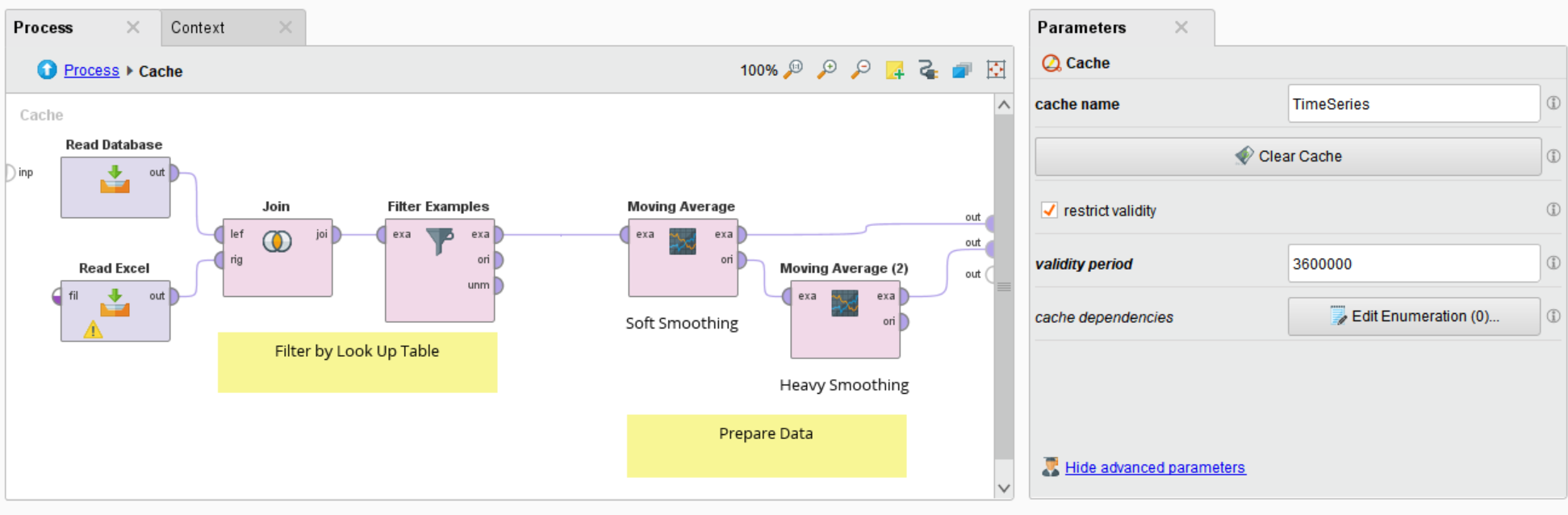

The Jackhammer's caching functionality can be useful in many situations, during development as well as in deployment. Let's imagine you have external resources that take a long time to load, for example large databases being queried by your process. Especially during the modelling phase of a project you will need to load the data very often while it doesn't change at all. With the new operators you can simply put a cache operator around the part of the process that loads and transforms the data. The cache operator will store the results of the subprocess in memory and, if they are too big, on a local disk. If the process is re-executed, the cache operator will check whether the cache is still valid or whether the generating process has been changed. If it is still valid and not too old, the cached version is returned immediately and the process continues.

Caching Subprocess with slow database query, filtering by external data and preprocessing. Runtime reduced from 3 minutes 42 seconds to 14 seconds
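The validity check described above (reuse the result only if the generating process is unchanged and the entry is not too old) can be sketched in a few lines of Python. This is only an illustration of the idea, under the assumption that the subprocess can be summarized as a text definition; `SubprocessCache` and its methods are hypothetical names, and the sketch keeps everything in memory instead of spilling to disk.

```python
import hashlib
import time
from typing import Any, Callable, Dict, Tuple


class SubprocessCache:
    """Cache results per subprocess definition, with a maximum age."""

    def __init__(self, max_age_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_age_seconds = max_age_seconds
        self._clock = clock
        # fingerprint of the definition -> (time stored, result)
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get_or_compute(self, definition: str, compute: Callable[[], Any]) -> Any:
        # A changed definition yields a new fingerprint, so it never hits
        # an entry that was produced by the old version of the subprocess.
        fingerprint = hashlib.sha256(definition.encode("utf-8")).hexdigest()
        now = self._clock()
        entry = self._entries.get(fingerprint)
        if entry is not None and now - entry[0] <= self.max_age_seconds:
            return entry[1]  # still valid and not too old
        result = compute()  # the slow part, e.g. a database query
        self._entries[fingerprint] = (now, result)
        return result


# Usage: the slow loader only runs when the definition changes.
runs = []


def slow_load() -> list:
    runs.append(1)
    return [1, 2, 3]


cache = SubprocessCache(max_age_seconds=600)
first = cache.get_or_compute("query signals + preprocess", slow_load)
second = cache.get_or_compute("query signals + preprocess", slow_load)
third = cache.get_or_compute("query signals + preprocess v2", slow_load)
```

Here `slow_load` is executed twice in total: once for the original definition and once after the definition changed.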


While this allows you to concentrate on the modelling by eliminating unnecessary waiting times, it becomes even more valuable in a deployment scenario. Let's assume that you are doing two queries from different databases for a process monitoring some machines. The one database contains the new signals being sent from the machines, while the other one contains meta information for this particular machine that is relatively stable over time. You can avoid the additional runtime caused by the second query by putting it into a cache. The cache can be parameterized to be sensitive to process variables / macros, so that it will store different results for different machines. And if the process time is not important to you, well, it's most probably important to your database admin.
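What "sensitive to process variables / macros" amounts to can be shown with a small Python sketch: the cache key is built only from the macros you declare as relevant, so each machine gets its own entry while unrelated macros do not cause extra queries. The helper `macro_sensitive_cache` and the macro names `machine_id` and `run_id` are assumptions for illustration, not names used by the extension.

```python
from typing import Any, Callable, Dict, Mapping, Optional, Sequence, Tuple


def macro_sensitive_cache(
    compute: Callable[[Mapping[str, str]], Any],
    sensitive_macros: Sequence[str],
) -> Callable[[Mapping[str, str]], Any]:
    """Wrap `compute` so results are cached per value of selected macros."""
    store: Dict[Tuple[Optional[str], ...], Any] = {}

    def run(macros: Mapping[str, str]) -> Any:
        # Only the declared macros take part in the key.
        key = tuple(macros.get(name) for name in sensitive_macros)
        if key not in store:
            store[key] = compute(macros)
        return store[key]

    return run


# Usage: meta information is queried once per machine, not once per run.
queries = []


def load_meta(macros: Mapping[str, str]) -> Dict[str, str]:
    queries.append(macros["machine_id"])
    return {"machine": macros["machine_id"], "type": "press"}


cached_meta = macro_sensitive_cache(load_meta, sensitive_macros=["machine_id"])
cached_meta({"machine_id": "A", "run_id": "1"})
cached_meta({"machine_id": "A", "run_id": "2"})  # same machine: served from cache
cached_meta({"machine_id": "B", "run_id": "3"})  # new machine: queried once
```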

Especially in a web service deployment that can be called thousands of times per minute, you want to eliminate anything that delays the execution. That not only includes database access but also any access to the repository (and hence to the underlying database). If you have a scoring process with a large model like a k-NN or SVM, you save a lot of time and database load by placing it within a cache operator. As the cache is time restricted, any update of the model will be reflected after a limited time, or you can use one of the cache control operators to empty the cache when a new model is trained.

If you are creating a web application with RapidMiner Server, caching becomes invaluable for improving responsiveness, too. For that particular use case it is possible to access a cache filled in one process from other processes as well, while ensuring that user rights are obeyed.

More flexible Loops

The Jackhammer extension contains an additional set of operators for flexible loops. In contrast to the ones from the RapidMiner core, they do not suffer from compatibility requirements to older versions. Hence they all share the same design principles and the same set of ports, and they contain every port necessary to avoid any complex setup. Additionally, they can be controlled from within to prematurely terminate the loop or skip single iterations. They all support parallel processing, which speeds up the calculations especially for larger data sets. They also allow you to process more data in memory by processing it in chunks.

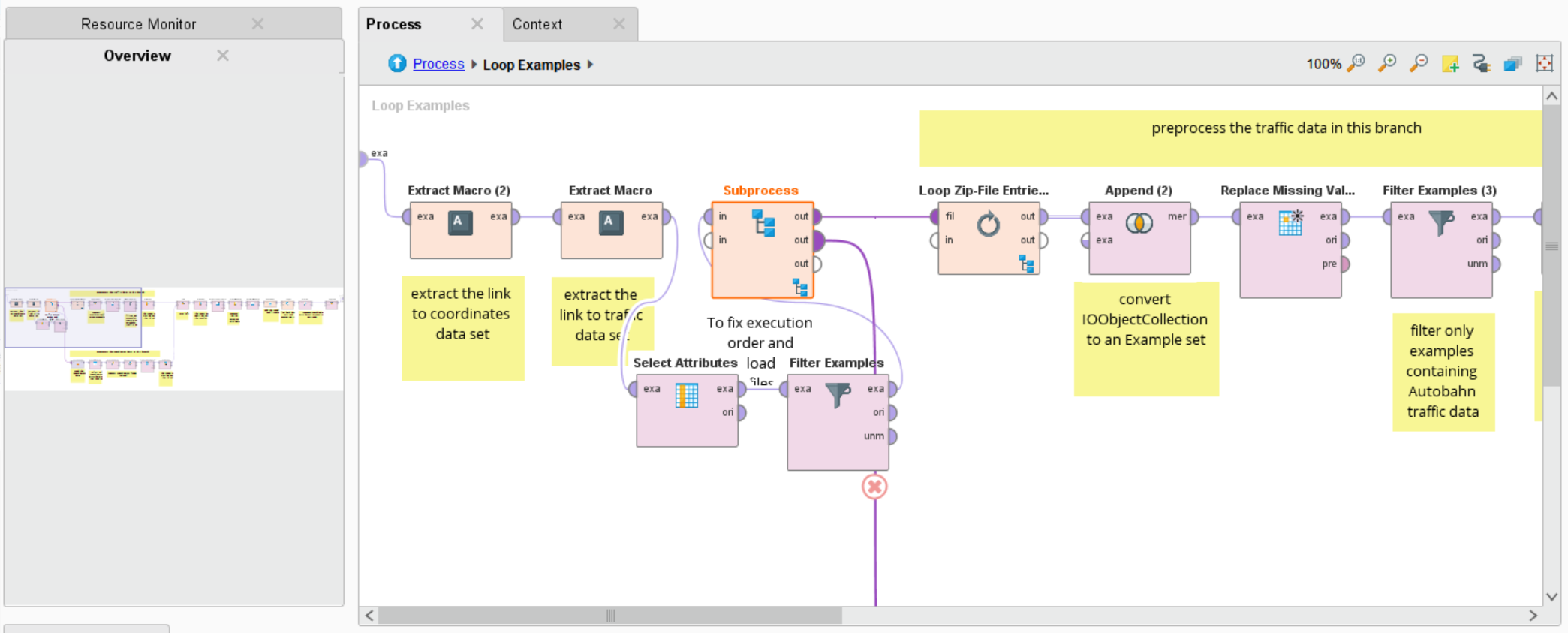

Every loop operator has input ports on the outside. What is delivered there will be forwarded to the inner input ports of the subprocess and is available there as a copy at every iteration. If a loop continuously works on one object and changes it at every iteration, this object can be forwarded to one of the loop output ports. Their results will be forwarded to the loop input ports in the next iteration. As this usually requires an initialization, e.g. passing an empty example set, the loop input ports are initialized with the input ports' objects in the first iteration. Results delivered to the inner output ports are collected, while the loop results are forwarded from iteration to iteration. Both are available on the outside.

Inner and outer Ports of one of the loop operators
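If you prefer to think in code, the port semantics can be mimicked with a plain Python loop: one value is handed to every iteration as a fresh copy (the input ports), one value is carried from iteration to iteration (the loop ports), and one value per iteration is collected (the output ports). This is only a mental model with hypothetical names such as `run_loop`, not the operators' actual implementation.

```python
import copy
from typing import Callable, List, Tuple, TypeVar

I = TypeVar("I")  # object delivered to the input ports
S = TypeVar("S")  # object travelling through the loop ports
R = TypeVar("R")  # per-iteration result collected at the output ports


def run_loop(
    iterations: int,
    fixed_input: I,
    initial_state: S,
    body: Callable[[int, I, S], Tuple[S, R]],
) -> Tuple[S, List[R]]:
    state = initial_state  # first iteration: initialized from the outside
    collected: List[R] = []
    for i in range(iterations):
        # Every iteration receives its own copy of the fixed input.
        state, result = body(i, copy.deepcopy(fixed_input), state)
        collected.append(result)
    # Both the final loop state and the collected results leave the loop.
    return state, collected


# Usage: start with an empty "example set" and append one row per iteration.
def body(i: int, params: dict, rows: list) -> Tuple[list, int]:
    new_rows = rows + [i * params["factor"]]
    return new_rows, len(new_rows)


final_rows, sizes = run_loop(3, {"factor": 2}, [], body)
```

After three iterations `final_rows` holds the accumulated rows, while `sizes` holds one collected result per iteration.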

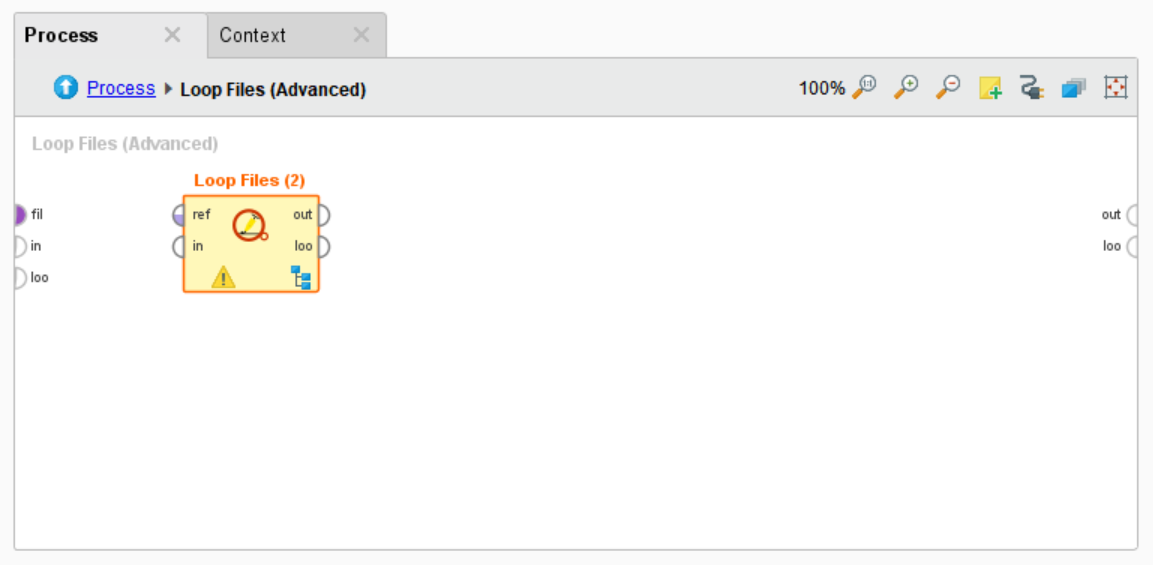

Additionally, the loops may have an optional port for their specific purpose. For example, the Loop Files (Advanced) operator can receive an ExampleSet containing file names. It will then loop over them instead of searching a directory specified by a parameter.

The following looping operators are available. All Advanced versions duplicate the core functionality and add the features as described above:

- Loop (Advanced): Same as core Loop

- Loop Repository (Advanced): Same as core Loop Repository, but also allows access to processes from the repository (and hence allows Meta Programming)

- Loop Files (Advanced): Same as core Loop Files

- Loop Batches: Processes a given data set as batches of specifiable size. Allows for parallelizing entire data preprocessing parts or for shrinking the size of the data processed in parallel so that it fits into main memory

- Loop Groups: Processes subsets of the data belonging to the same group. Group is identified by the same values of a number of attributes. Group identifiers can be automatically extracted as process variable / macro.

- Loop Index: Processes every entry of an indexed object collection (see below). Current index values are provided as process variable / macro.

- Loop Remote Files: Same as Loop Files (Advanced), but loops over remote file connections such as ftp/sftp/ftps

- Read CSV (Batchwise): Iteratively reads a batch of rows from a CSV file, processes them and continues with the next batch. This allows you to process arbitrarily large CSV files, e.g. to import them into a database. May be multithreaded to speed things up if preprocessing takes a long time.
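The batchwise idea behind Loop Batches and Read CSV (Batchwise) is that only one batch needs to be in memory at a time. A minimal Python sketch of the same pattern, using only the standard library, looks like this. The function name `read_csv_batches` is hypothetical, and the sketch is sequential, without the multithreading the operators offer.

```python
import csv
import io
from itertools import islice
from typing import Dict, Iterator, List, TextIO


def read_csv_batches(handle: TextIO, batch_size: int) -> Iterator[List[Dict[str, str]]]:
    """Yield the rows of a CSV file in batches of at most `batch_size` rows."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    reader = csv.DictReader(handle)  # first line is used as header
    while True:
        batch = list(islice(reader, batch_size))  # reads only the next rows
        if not batch:
            return  # file exhausted
        yield batch


# Usage with an in-memory file; a real file handle works the same way.
sample = io.StringIO("id,value\n1,10\n2,20\n3,30\n4,40\n5,50\n")
batch_sizes = []
total = 0
for batch in read_csv_batches(sample, batch_size=2):
    batch_sizes.append(len(batch))
    total += sum(int(row["value"]) for row in batch)  # per-batch processing
```

Because the reader is consumed lazily, the file size is limited by the disk, not by main memory; only the per-batch processing result has to be kept.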

Indexed Collections

Collections in RapidMiner are very useful in situations where you repeat a particular task several times. In a monitoring scenario we might have hundreds of machines in dozens of factories, in a churn related application we might have thousands of customers, ...

It is standard procedure to loop over the particular entities we are analyzing. Usually you either store the results for each entity in RapidMiner's repository or, if you output data, you append the resulting collection. Both approaches have drawbacks in very large settings with thousands of entities: if you store too many entries in the repository, it will become slow, and both management and deployment become hell due to a large per-entry overhead. If you combine the data in one large table and just want to access the data for a particular entity, you need to load everything and filter everything, which is quite inefficient unless you go through external infrastructure like a database. Unfortunately, the latter approach only applies to data and not to complex objects like prediction models.

Our indexed collections solve both problems: as with the standard collections, you can simply collect all objects in them, but instead of chaining them linearly, you assign one or more keys to each added object. These values can later be used to retrieve the object again. So if your entities have identifiers like factory place and machine id, or customer number, you simply get the entry for these values. This way you only need to store one object, which is far more efficient. If loading is eliminated with a Cache operator, the access is instantaneous, so you don't need to filter again.

Indexed Model for different items in different stores. Each model is a combination of a preprocessing and a linear regression model
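Conceptually, an indexed collection behaves like a dictionary whose key is a tuple of identifier values. The following Python sketch shows that idea with store and item as keys, as in the figure. The class `IndexedCollection` and its methods are hypothetical and only illustrate the concept, and the "models" are simple stand-in functions rather than real preprocessing and regression models.

```python
from typing import Dict, Generic, Iterator, Sequence, Tuple, TypeVar

T = TypeVar("T")


class IndexedCollection(Generic[T]):
    """Collect objects under one or more named keys and retrieve them by key."""

    def __init__(self, key_names: Sequence[str]) -> None:
        self.key_names = tuple(key_names)
        self._objects: Dict[Tuple[str, ...], T] = {}

    def _key(self, keys: Dict[str, str]) -> Tuple[str, ...]:
        if set(keys) != set(self.key_names):
            raise KeyError(f"expected keys {self.key_names}, got {tuple(keys)}")
        return tuple(keys[name] for name in self.key_names)

    def add(self, obj: T, **keys: str) -> None:
        self._objects[self._key(keys)] = obj

    def select(self, **keys: str) -> T:
        # Direct lookup: no loading and filtering of all other entries.
        return self._objects[self._key(keys)]

    def items(self) -> Iterator[Tuple[Dict[str, str], T]]:
        # Looping yields the key values alongside each object,
        # similar to having them available as macros.
        for key, obj in self._objects.items():
            yield dict(zip(self.key_names, key)), obj


# Usage: one stand-in "model" per item and store.
models = IndexedCollection(["store", "item"])
models.add(lambda x: 2.0 * x + 1.0, store="Berlin", item="milk")
models.add(lambda x: 0.5 * x, store="Dortmund", item="bread")

prediction = models.select(store="Berlin", item="milk")(3.0)
stores = [keys["store"] for keys, _ in models.items()]
```

The whole collection is a single object, so it can be stored once and, combined with a cache, queried by key without touching the repository again.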

The extension also contains a special operator to loop over these collections, with the keys being made available as process variables / macros. This can be nicely used to report on model performance for every single entity, etc. Besides the loop, there are also operators to access the index for single objects, which can be helpful in web service or web app deployment.

Summary

The Jackhammer extension contains a huge set of operators that address different parts of the daily life of a data scientist.

You can simply download it over RapidMiner Studio's marketplace integration or manually via this link: https://marketplace.rapidminer.com/UpdateServer/faces/product_details.xhtml?productId=rmx_toolkit

Several of the operators are completely free. Some of them are limited in functionality in demo mode. Please see our pricing for details on purchasing. Feel free to reach out to us if you have any questions.

And last but not least, here's an extensive list of the contained operators:

Process Control

- Extract Macros from Example

- Execute Process in Background

- Execute Process

- Stop Process (Graciously)

- Handle Exception (Advanced)

- Determine Order

Caching

- Cache

- Retrieve Cache

- Clear Cache

Collections

- Combine Indexed Objects

- Extend Indexed Object

- Select by Key

Loops

- Loop (Advanced)

- Loop Batches (Advanced)

- Loop Groups (Advanced)

- Loop Index

- Loop Remote Files

- Loop Files (Advanced)

- Loop Repository (Advanced)

Loop Control

- Skip Iteration

Validation

- Cross Validation (Advanced)

Testing

- Assert Equality

Data Access

- Read Matlab

- Store Result

- Open Remote File

- Write Remote File

- Delete Remote File

- Move Remote File

Loops

- Read CSV (Batchwise)

Generation

- Generate Sequence

- Generate Sequence Data

- Generate Group Sequences

- Generate Group Indices

- Generate Empty Data

Modeling

- Indexed Model

- Combine Indexed Models

- Extend Indexed Model

Transformation

- Nominal to Date (Advanced)

- Discretize by Specification Data

- Sort (Advanced)

- Union (Advanced)

- Set Minus (Advanced)

- Difference

- Intersect (Advanced)

Series

- Resample Series

- Resample Multiple Series

Graph

- Extract Tree from Repository

- Tree to Data (relational)

- Data to Tree (relational)

- Data to Tree (wide)

- Tree to Data (wide)

- Select Descendants

- Select Ancestors

Comments

great article @land and great functionality for RM Studio users.

@land had me at caching!