Splitting text into sentences

bhupendra_patil

Employee, Member Posts: 168

RapidMiner provides powerful text mining capabilities for working with unstructured data. Unstructured data comes in various formats, e.g. short comments such as tweets, which can be analyzed in their entirety, or long documents and paragraphs, which sometimes need to be broken into smaller units. This article presents techniques for splitting longer texts into sentences, but the general concepts can be applied to other use cases too.

Please find attached a zip file containing working examples that go along with the content here.

The extensions used in this example are:

- RapidMiner Text Mining Extension (Download from marketplace or here)

- RapidMiner Web Mining Extension (Download from marketplace or here)

The data used in this example is an RSS feed of Google News for the S&P 500 index.

After dropping all columns except Content and Id, the data looks like this. As you will notice, the content is HTML rather than plain text, so we will need to handle that too.
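For readers who want to see what "handling the HTML" amounts to outside of RapidMiner, here is a minimal Python sketch using only the standard library. It is an illustration, not the operator used in the attached process. The function name `html_to_text` and the sample row are hypothetical.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects only the text nodes of an HTML fragment."""

    def __init__(self):
        # convert_charrefs=True turns entities such as &amp; into characters.
        super().__init__(convert_charrefs=True)
        self.parts = []

    def handle_data(self, data):
        self.parts.append(data)


def html_to_text(html):
    """Return the visible text of an HTML fragment with whitespace collapsed."""
    parser = _TextExtractor()
    parser.feed(html)
    parser.close()
    # Text nodes are joined without a separator, so text in adjacent block
    # elements (e.g. </p><p>) is not separated; good enough for a sketch.
    return " ".join("".join(parser.parts).split())


# Hypothetical row shaped like the feed data (Id, Content).
row = {"Id": 1, "Content": "<p>Stocks <b>rose</b> on Monday. The S&amp;P 500 gained.</p>"}
print(html_to_text(row["Content"]))
```

The cleaned text is what the sentence splitting in the methods below would then operate on.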

Now let's look at two possible ways to handle this split-into-sentences use case.

Method 1 (Using Tokenize into Sentences)

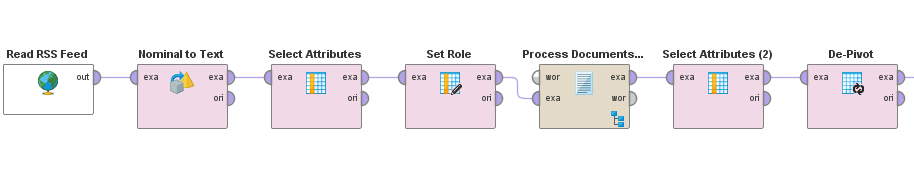

This method uses RapidMiner's Tokenize operator to split the text into sentences. The actual process looks something like this.

As with most RapidMiner text processing, the core logic happens inside the "Process Documents from Data" operator.

Here is what the inside of "Process Documents" looks like

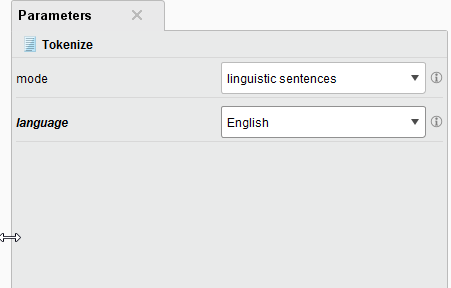

Here is what the Tokenize settings look like

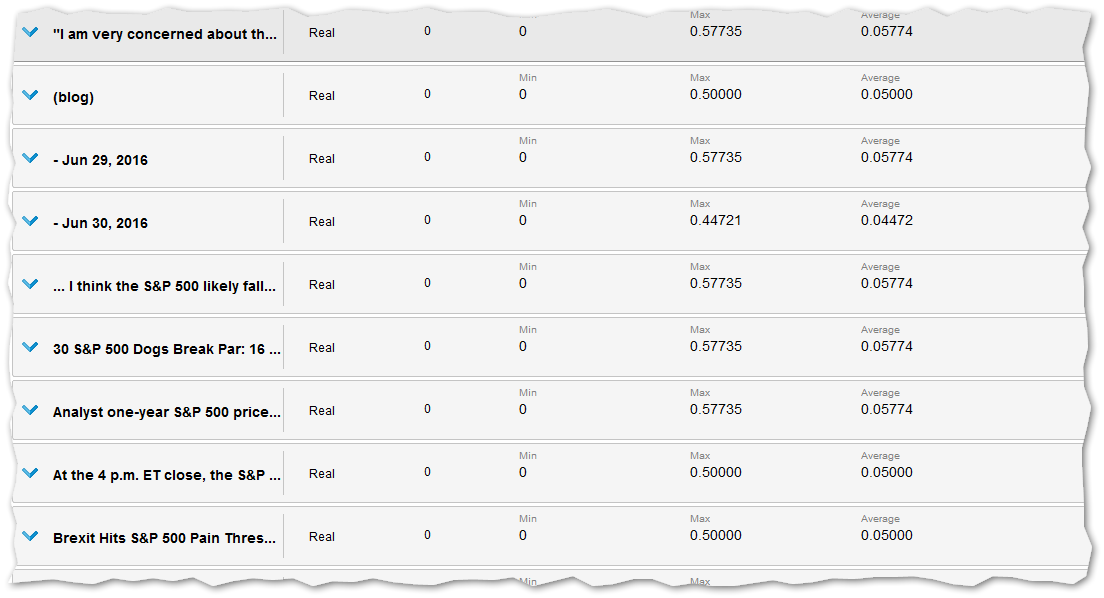

The output of this step contains new columns, one for each sentence in each of the documents.

At this point you have successfully split each HTML document into sentences.

Depending on your needs, this may be sufficient; otherwise, you can use RapidMiner's data preparation capabilities to convert the result into other formats. The provided example uses the De-Pivot operator to arrange all the sentences into one column that can be used for further processing.
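To make the shape of the data concrete, the following Python sketch mimics the two steps of Method 1: splitting each document into sentence columns (wide format), then rearranging them into a single sentence column (long format). The boundary rule is a naive assumption chosen for illustration and is not the rule the Tokenize operator applies. The helper names `to_wide` and `de_pivot` and the sample documents are hypothetical.

```python
import re

# Naive sentence boundary: '.', '!' or '?' followed by whitespace.
# Assumption for illustration only; abbreviations like "e.g. x" would be split.
_SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def split_sentences(text):
    """Split text into sentences using the naive boundary above."""
    return [s for s in _SENTENCE_END.split(text.strip()) if s]


def to_wide(docs):
    """One row per document, one column per sentence."""
    rows = []
    for doc_id, text in docs.items():
        row = {"Id": doc_id}
        for i, sentence in enumerate(split_sentences(text), start=1):
            row[f"sentence_{i}"] = sentence
        rows.append(row)
    return rows


def de_pivot(rows):
    """One row per (document, sentence), similar in spirit to De-Pivot."""
    return [
        {"Id": row["Id"], "sentence": value}
        for row in rows
        for key, value in row.items()
        if key != "Id"
    ]


docs = {1: "Stocks rose. Bonds fell.", 2: "Oil was flat."}
long_rows = de_pivot(to_wide(docs))
for r in long_rows:
    print(r)
```

In the wide form, documents with fewer sentences simply have fewer columns filled; the long form avoids that and is usually easier to feed into later steps.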

Method 2 (Using "Cut Document" operator)

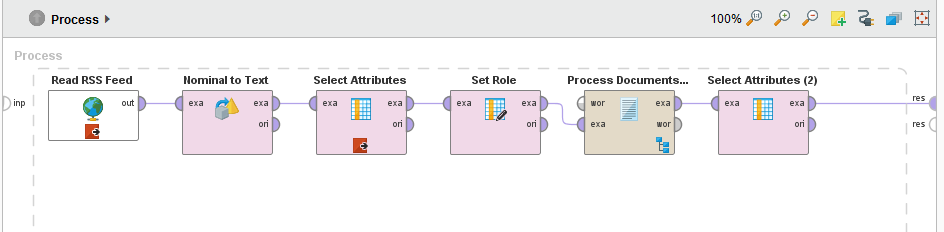

The top-level process will look something like this.

The inside of "Process Documents from Data" looks like this

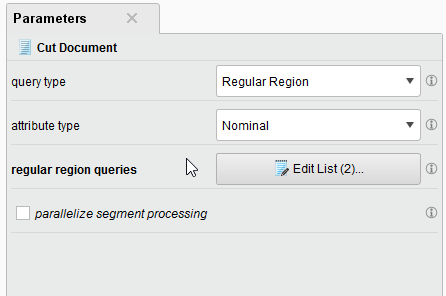

The settings for the Cut Document operator look like this

The regular region queries we are using are shown below. They split sentences not only on the full stop but also on some common conjunctions.

The output of this process will look something like this.

An additional advantage of this method is that it allows more control over how the documents are split, and the ID lets you trace each fragment back to its original document.
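The idea behind Method 2 can be sketched in Python as follows: cut each document at full stops and at a few conjunctions, and keep the source ID on every fragment. The boundary pattern here is a hypothetical stand-in, since the exact expressions configured in the Cut Document operator are not reproduced in this text. The field names `origin_id` and `fragment_no` are likewise assumptions.

```python
import re

# Hypothetical boundary pattern: a full stop, or the conjunctions
# "and" / "but" as whole words. Not the article's actual expressions.
_BOUNDARY = re.compile(r"\.\s*|\s+\b(?:and|but)\b\s+", re.IGNORECASE)


def cut_document(doc_id, text):
    """Cut text into fragments and tag each one with its origin document."""
    pieces = (p.strip() for p in _BOUNDARY.split(text))
    return [
        {"origin_id": doc_id, "fragment_no": n, "text": piece}
        for n, piece in enumerate((p for p in pieces if p), start=1)
    ]


# Hypothetical document keyed by its Id.
docs = {7: "Stocks rose but bonds fell. Oil was flat."}
fragments = [frag for doc_id, text in docs.items() for frag in cut_document(doc_id, text)]
for frag in fragments:
    print(frag)
```

Note that a pattern this simple also cuts at full stops inside numbers such as "3.5", so real expressions typically need to be stricter.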