Logistic regression with and without regularisation

Hi all,

I have a classification problem for which I use logistic regression.

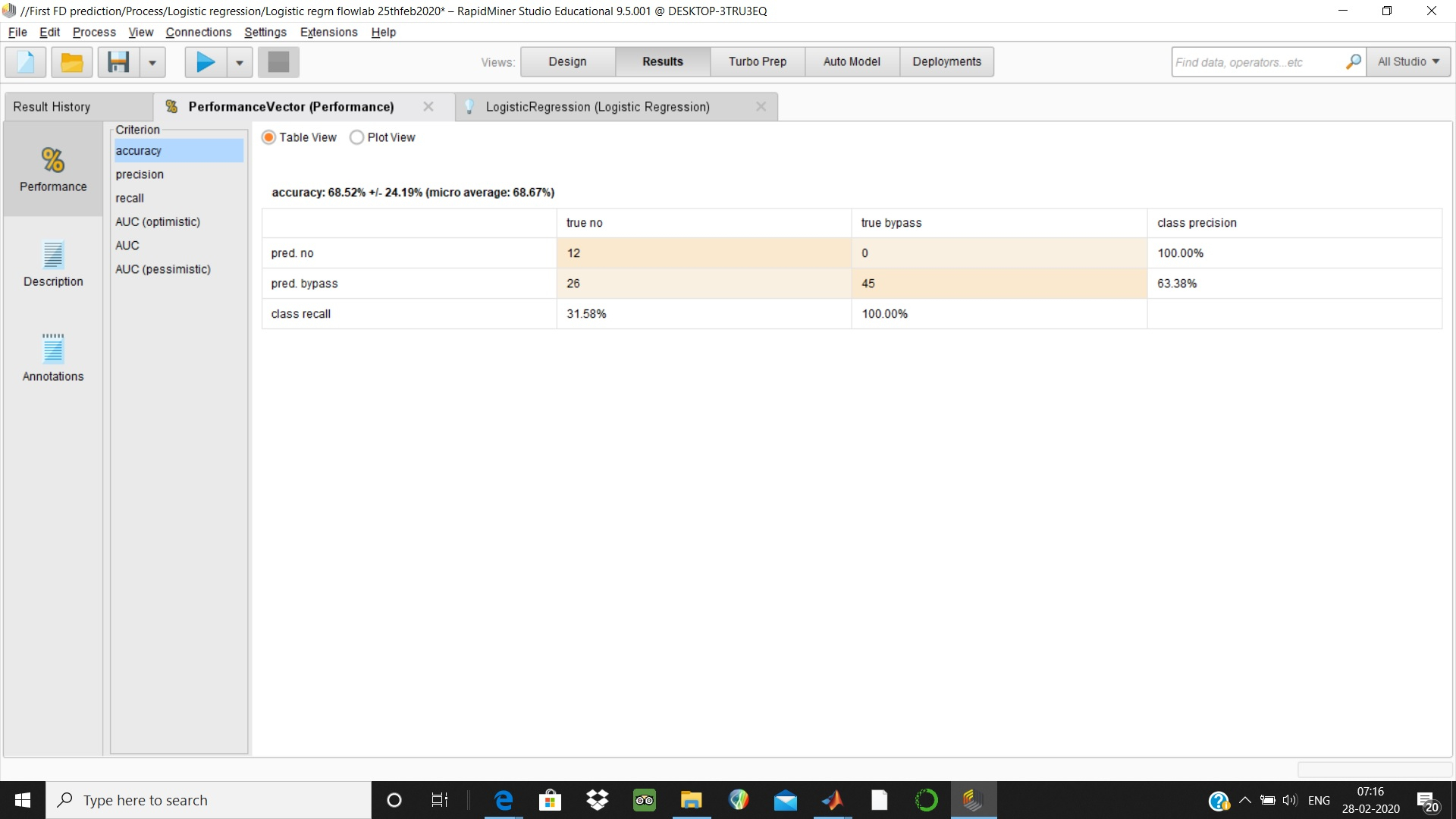

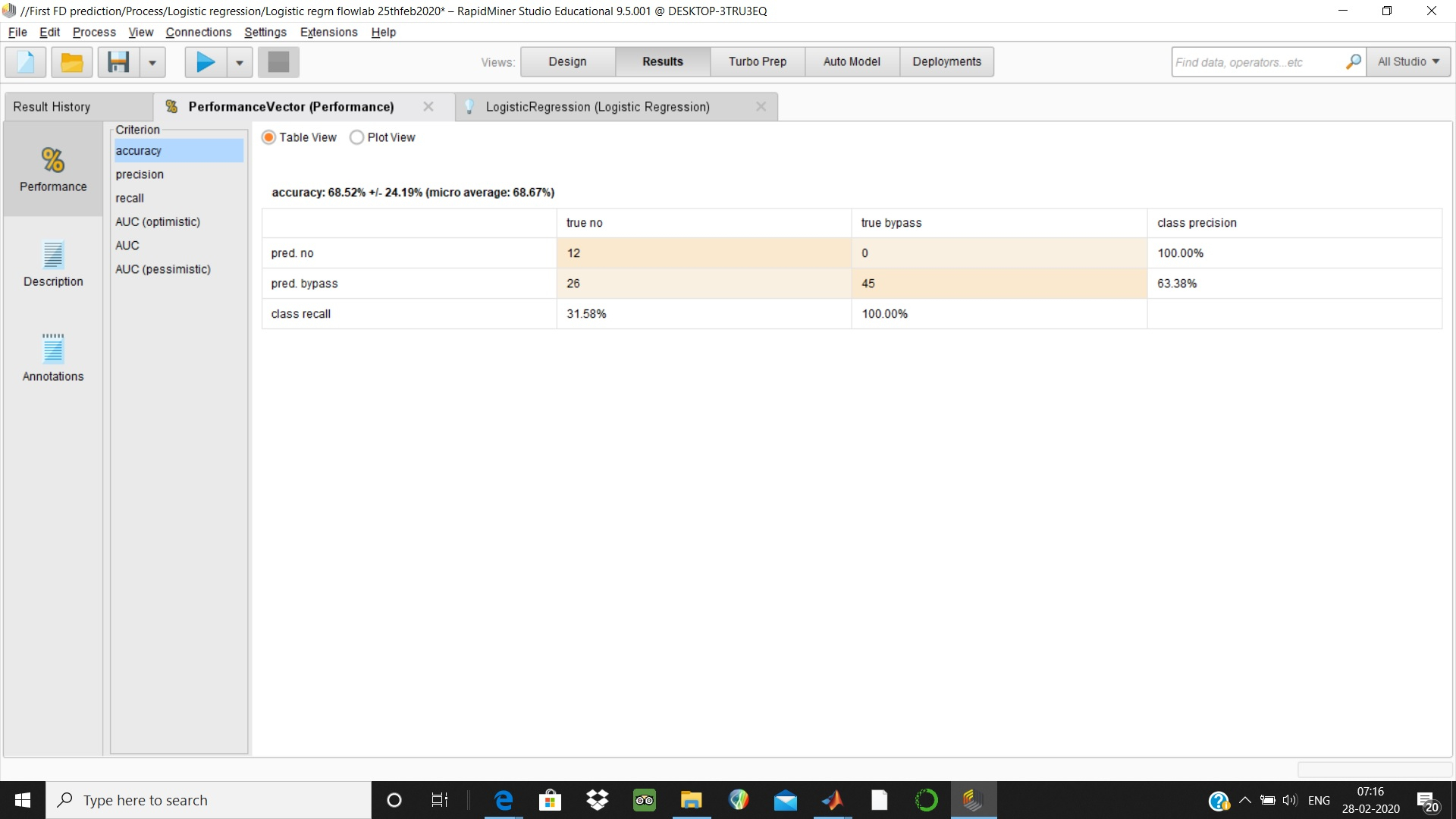

At first, I get an accuracy of 68.52% with 100% recall.

Subsequently, with regularisation, I get an accuracy of 98.77% with 100% recall.

1. Can you elaborate on how regularisation leads to such a large jump in accuracy? Can you explain the basics behind this RapidMiner option?

2. I couldn't see the lambda value in the results. Is there any way to get it displayed?

3. In both cases I get 100% recall (zero false negatives, which is desirable in my case), but I'm not sure whether it is a good model.

I achieved the above after normalisation and cross-validation. I'm enclosing both results. Thanks.

Regards,

Thiru

Best Answers

varunm1

Member Posts: 1,207  Unicorn

Hello @Thiru

Regularization is used for better generalization. It does not always improve results, because sometimes the increase in bias outweighs the reduction in variance. In your case, the reduction in variance probably outweighed the increase in bias introduced by regularization, which gave a better result. In simple words, your model may have overfitted without regularization and therefore performed worse on the test data.

"I couldn't see the lambda value in the results. Is there any way to get it displayed?"

Did you check the "Description" tab of the logistic regression model? The lambda value is shown there. Is this what you were asking?

"In both cases I get 100% recall (zero false negatives, which is desirable in my case), but I'm not sure whether it is a good model."

Actually, the 100% recall you are observing is for only one class (the positive class); you need to check recall for both classes. If a model blindly classifies every observation as positive, recall for that class will also be 100%. Weigh all the performance metrics together (AUC, accuracy, etc.) rather than relying on a single one. Please let us know if you need anything else.
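To make the point about per-class recall concrete, here is a minimal Python sketch. The labels are made up for illustration (they are not the data from this thread), and `per_class_recall` is a hypothetical helper, not a RapidMiner function. It shows a degenerate model that predicts the positive class for everything: positive recall is perfect while negative recall is zero.

```python
def per_class_recall(y_true, y_pred):
    """Return {label: recall} for every label that occurs in y_true."""
    recalls = {}
    for label in set(y_true):
        # Indices of the examples that truly belong to this class.
        idx = [i for i, t in enumerate(y_true) if t == label]
        # How many of those examples the model labelled correctly.
        hits = sum(1 for i in idx if y_pred[i] == label)
        recalls[label] = hits / len(idx)
    return recalls


# Made-up labels: 7 positives and 3 negatives.
y_true = ["pos"] * 7 + ["neg"] * 3
# A degenerate model that predicts "pos" for every observation.
y_pred = ["pos"] * 10

recalls = per_class_recall(y_true, y_pred)
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(recalls, accuracy)
```

In RapidMiner, the same information should be readable from the full confusion matrix in the performance result, which lists class recall for each class separately.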

Just my 2c.

Regards,

Varun

https://www.varunmandalapu.com/

Be Safe. Follow precautions and Maintain Social Distancing

varunm1

Member Posts: 1,207  Unicorn

Your model fit depends on the lambda value. With a high lambda, model complexity is reduced and the model becomes simpler. The risk with a high lambda is underfitting: the model might not capture all the information in the training data.

Similarly, if your lambda is too low, the model might be too complex and overfit the data. Overfitting reduces the model's ability to generalize.
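The high-lambda and low-lambda cases described above can be illustrated with a small sketch. It is a plain L2-penalised logistic regression trained by gradient descent on made-up toy data, not RapidMiner's own implementation. The function name `fit_logreg_l2`, the learning rate, the step count and the lambda grid are all assumptions chosen for illustration. The objective minimised is the mean log-loss plus `(lam / 2) * ||w||^2`, so a larger `lam` pulls the weights toward zero and yields a simpler model.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_logreg_l2(X, y, lam, lr=0.1, steps=2000):
    """Gradient descent on mean log-loss + (lam / 2) * ||w||^2.

    The bias term b is not penalised.
    """
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(steps):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            # Prediction error for this example.
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(d):
                grad_w[j] += err * xi[j] / n
            grad_b += err / n
        # The penalty adds lam * w to the gradient of the weights.
        w = [wj - lr * (gj + lam * wj) for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


# Made-up, linearly separable toy data (two features, binary label).
X = [[-2.0, -1.0], [-1.5, 0.5], [-1.0, -0.5],
     [1.0, 0.5], [1.5, -0.5], [2.0, 1.0]]
y = [0, 0, 0, 1, 1, 1]

norms = {}
for lam in (0.0, 0.1, 1.0, 10.0):
    w, _ = fit_logreg_l2(X, y, lam)
    norms[lam] = math.sqrt(sum(wj * wj for wj in w))
    print(lam, round(norms[lam], 3))
```

The printed weight norm shrinks as lambda grows. Very small weights correspond to the underfitting end of the range, while unpenalised weights on separable data keep growing, which is the overfitting end.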

Both underfitting and overfitting have a negative influence on performance. This is why you should find a lambda that balances simplicity and fit.

Regards,

Varun

https://www.varunmandalapu.com/

Be Safe. Follow precautions and Maintain Social Distancing

Answers

Thanks for your reply. Can you elaborate on the role of lambda and how it changes the performance of the model? Thanks.

regds

thiru